In Kubernetes and other containerized environments, an Ingress controller acts as a specialised kind of load balancer. Kubernetes is the de facto standard for managing containerized applications, but many companies run into new application traffic management problems when they move production workloads onto it. An Ingress controller sits between the services running inside a Kubernetes cluster and the external services and clients that need to reach them, and it handles the complex routing of application traffic.

Containerization and microservices have grown in popularity as a means of keeping up with the rising demand for applications. However, considerable network orchestration difficulties must be overcome before these approaches can be properly implemented. One of the trickiest parts of building cloud-native applications is understanding how to utilise a Kubernetes Ingress controller strategically. It is not the only crucial part, but it is an important one.

What You Should Know About the Ingress Controller

Before digging into Ingress controllers, you should know how important networking is to development platform operations. Development teams commonly provide backend API services that other applications and users connect to. In the early phases of software development, teams often run their container environments on local development workstations. Docker Compose and comparable local orchestrators simplify access to these environments so that containers can be called directly.

These direct-access stopgaps are no longer sufficient when it is time to move to a shared development or staging environment that matches the setup used in production. Access patterns often presume reliable connectivity, which cannot be assured in production, or they depend on static values that are prone to change in a cloud environment. Neither can be taken as a given.

Kubernetes Ingress Controllers

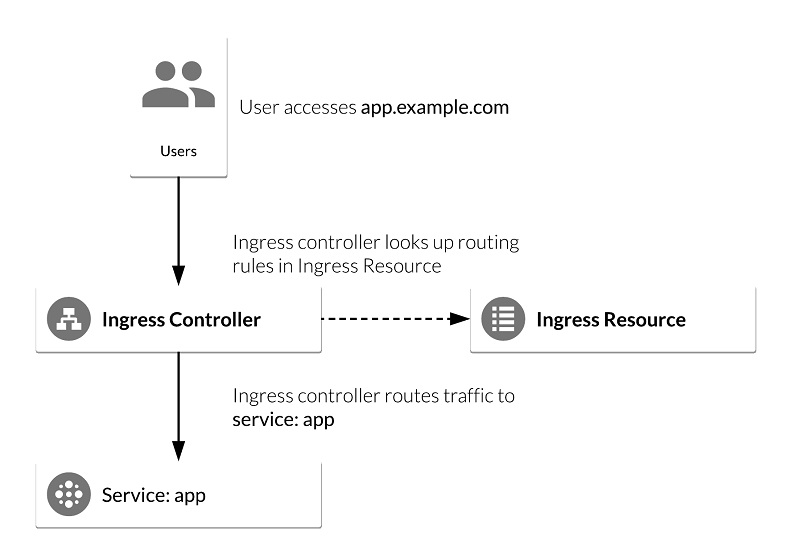

An Ingress controller accepts traffic from outside the Kubernetes platform and load balances it to pods (containers) running within the platform.

It can also control outbound traffic for services in the cluster that need to communicate with services outside it.

It is configured through the Kubernetes API by deploying objects called “Ingress Resources”.

If pods are added to or removed from a service while Kubernetes is running, it updates the load-balancing rules automatically.
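To make the idea of an Ingress resource concrete, here is a minimal sketch that builds one as a Python dictionary and prints it as JSON. The field layout follows the `networking.k8s.io/v1` Ingress schema; the helper `build_ingress` and the names `demo-ingress`, `app.example.com`, and `demo-service` are hypothetical and chosen only for illustration.

```python
import json


def build_ingress(name, host, service_name, service_port, path="/"):
    """Return a minimal networking.k8s.io/v1 Ingress manifest as a dict."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            "rules": [
                {
                    # Requests for this host are matched against the paths below.
                    "host": host,
                    "http": {
                        "paths": [
                            {
                                "path": path,
                                "pathType": "Prefix",
                                # Matching traffic is sent to this Service,
                                # which in turn fronts the pods.
                                "backend": {
                                    "service": {
                                        "name": service_name,
                                        "port": {"number": service_port},
                                    }
                                },
                            }
                        ]
                    },
                }
            ]
        },
    }


# Hypothetical names, used only for this example.
manifest = build_ingress("demo-ingress", "app.example.com", "demo-service", 80)
print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and submitted with `kubectl apply -f`, since the Kubernetes API accepts JSON as well as YAML. The rules only take effect once an Ingress controller is running in the cluster to act on them.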

If you’re new to Kubernetes networking, these basics, including the role of the Ingress controller, are a good place to start. Ingress controllers fall into three primary categories: open source, cloud-vendor default, and commercial. Which one is best depends on your purposes.

Additional features that set Kubernetes apart include automatic bin packing and self-healing

With automatic bin packing, the user provides the platform with a set of nodes for running containerized tasks and states how much CPU and memory each container needs. To make the most efficient use of available resources, Kubernetes places containers on those nodes based on the stated CPU and memory requirements.
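The placement idea can be sketched with a simple first-fit heuristic. This is a deliberately simplified illustration, not the algorithm the Kubernetes scheduler actually uses (the real scheduler filters and scores nodes on many more criteria). The `Node` class, the `schedule` function, and all node and pod names and sizes are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_cpu_m: int    # remaining CPU in millicores
    free_mem_mib: int  # remaining memory in MiB
    pods: list = field(default_factory=list)

    def fits(self, cpu_m, mem_mib):
        return cpu_m <= self.free_cpu_m and mem_mib <= self.free_mem_mib

    def place(self, pod_name, cpu_m, mem_mib):
        self.free_cpu_m -= cpu_m
        self.free_mem_mib -= mem_mib
        self.pods.append(pod_name)


def schedule(pods, nodes):
    """Place pods on the first node with enough free CPU and memory.

    pods is a list of (name, cpu_m, mem_mib) tuples. Larger requests are
    placed first. Returns a dict mapping each pod name to a node name, or
    to None when no node has room for it.
    """
    placement = {}
    for name, cpu_m, mem_mib in sorted(pods, key=lambda p: (p[1], p[2]), reverse=True):
        target = next((n for n in nodes if n.fits(cpu_m, mem_mib)), None)
        if target is not None:
            target.place(name, cpu_m, mem_mib)
        placement[name] = target.name if target else None
    return placement


# Hypothetical cluster and workloads.
nodes = [Node("node-a", 2000, 4096), Node("node-b", 1000, 2048)]
pods = [("api", 1500, 2048), ("worker", 800, 1024), ("cache", 500, 1024), ("batch", 900, 512)]
placement = schedule(pods, nodes)
print(placement)
```

In this run the `worker` pod finds no node with 800 millicores free and is left unplaced, which loosely corresponds to a pod that stays pending until capacity becomes available.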

Conclusion

Self-healing is the platform’s ability to restart malfunctioning containers, kill containers that don’t pass a user-defined health check, replace containers as needed, and hide containers from clients until they’re ready to be used.
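In Kubernetes these behaviours are typically configured through probes on a container. The sketch below builds a minimal Pod manifest as a Python dictionary: the liveness probe is the user-defined health check whose repeated failure gets the container killed and restarted, and the readiness probe keeps the pod out of Service endpoints until it passes. The helper `build_pod_with_probes`, the image `example.com/demo-app:1.0`, the `/healthz` path, and the timing values are all assumptions made for illustration.

```python
import json


def build_pod_with_probes(name, image, port, health_path="/healthz"):
    """Return a minimal v1 Pod manifest with liveness and readiness probes."""
    http_check = {"httpGet": {"path": health_path, "port": port}}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Restart the container whenever it exits or is killed.
            "restartPolicy": "Always",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    "ports": [{"containerPort": port}],
                    # After 3 consecutive failures the container is killed.
                    "livenessProbe": {**http_check, "periodSeconds": 10, "failureThreshold": 3},
                    # Until this passes, the pod receives no Service traffic.
                    "readinessProbe": {**http_check, "periodSeconds": 5},
                }
            ],
        },
    }


# Hypothetical names and values, used only for this example.
pod = build_pod_with_probes("demo-app", "example.com/demo-app:1.0", 8080)
print(json.dumps(pod, indent=2))
```

Replacing a pod that is lost entirely is usually handled by a higher-level controller such as a Deployment rather than by a bare Pod like this one.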